Imagine trying to build a skyscraper, but the bricks are kept in a warehouse ten miles away, and you only have one small pickup truck to move them. No matter how fast your bricklayers work, the entire project is held hostage by the speed of that truck.

In the world of Agentic AI, we are currently living in that logistical nightmare. We’ve built “bricklayers” (LLMs) that can think at the speed of light, but they run on an architectural foundation laid by John von Neumann in 1945. This is the Von Neumann Tax, and it is the single greatest invisible cost in your AI infrastructure.

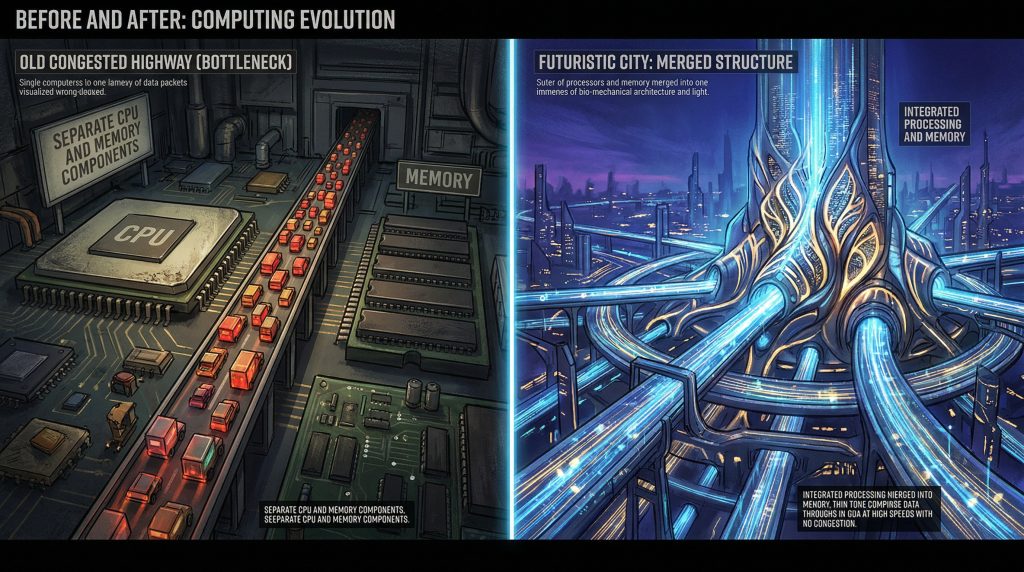

The Why: The Invisible Speed Limit

When I worked on the 8085, the “traffic” was manageable. You moved a byte from memory to a register, performed an operation, and moved it back. But today’s Agentic AI doesn’t move bytes; it moves billions of parameters.

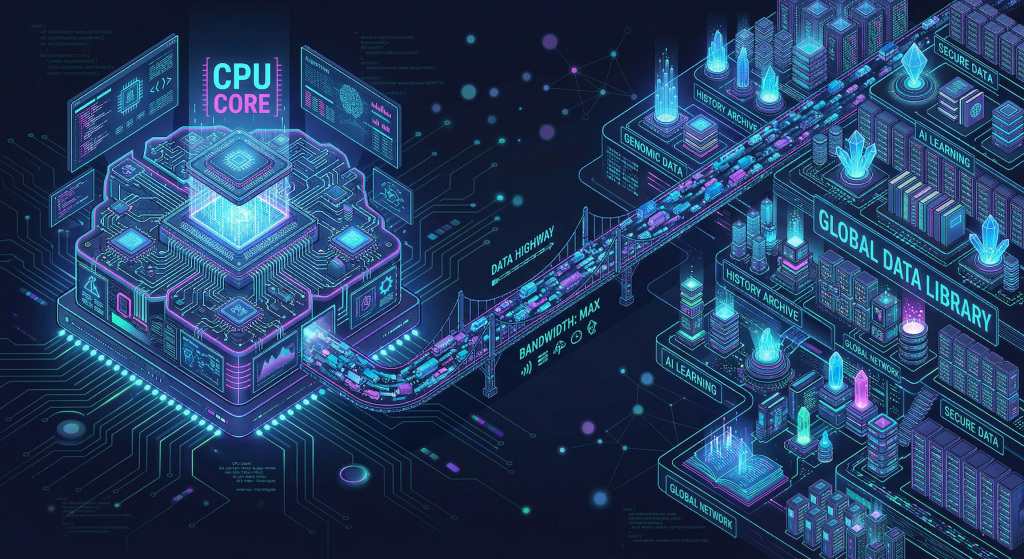

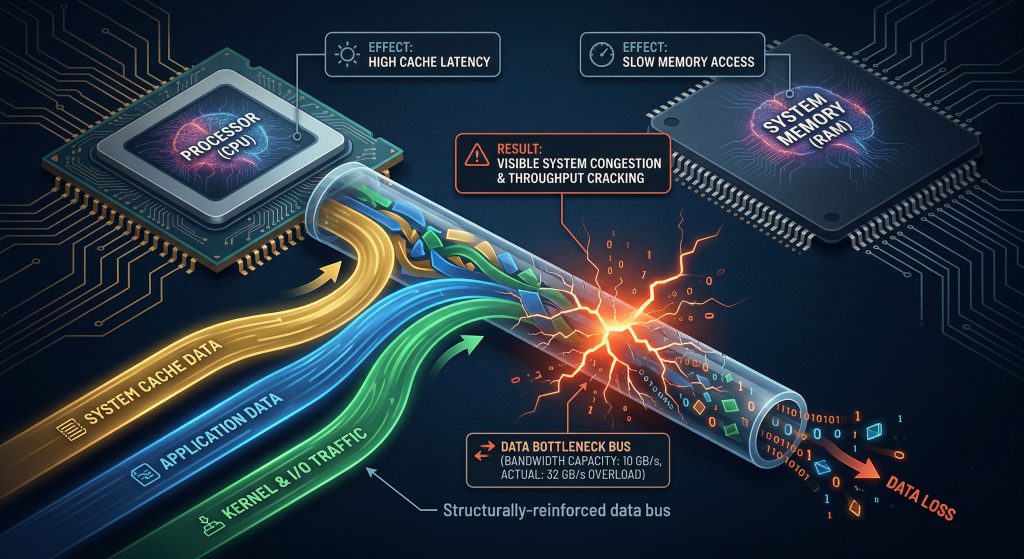

The Von Neumann Architecture (VNA) fundamentally separates the Processor (the brain) from the Memory (the library). They are connected by a shared “bus”: the pickup truck in our analogy. The limit this link imposes is known as the Von Neumann Bottleneck, and the Generative era pushes it to the extreme. Every time an AI agent “thinks” (that is, generates its next token), it has to fetch massive model weights and conversation context across that narrow bridge.

Von Neumann’s Narrow Bridge

In memory-bound workloads like token-by-token generation, we can end up spending as much as 90% of our energy and time simply moving data back and forth, while the processor sits idle, tapping its fingers, waiting for the bricks to arrive.
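To put rough numbers on the idle bricklayers, here is a back-of-envelope sketch in Python. It compares the time needed to stream a model's weights from memory with the time needed to do the math on them for one generated token. The model size, weight precision, memory bandwidth, and peak compute figures are all assumptions I picked for illustration, not measurements of any real chip, and the sketch ignores batching and caching.

```python
# Back-of-envelope: is single-stream token generation limited by math or by memory traffic?
# All numbers below are illustrative assumptions, not measurements of any real chip or model.

PARAMS = 7e9            # assumed model size: 7 billion parameters
BYTES_PER_PARAM = 2     # assumed 16-bit weights
MEM_BANDWIDTH = 1e12    # assumed memory bandwidth: 1 TB/s
PEAK_FLOPS = 100e12     # assumed peak compute: 100 TFLOP/s


def per_token_times(params, bytes_per_param, bandwidth, flops):
    """Return (seconds moving weights, seconds doing math) for one generated token."""
    bytes_moved = params * bytes_per_param  # every weight is read once per token
    math_ops = 2 * params                   # roughly one multiply and one add per weight
    return bytes_moved / bandwidth, math_ops / flops


move_s, math_s = per_token_times(PARAMS, BYTES_PER_PARAM, MEM_BANDWIDTH, PEAK_FLOPS)
idle_fraction = 1 - math_s / move_s  # share of the step the compute units spend waiting

print(f"moving weights: {move_s * 1e3:.2f} ms per token")
print(f"doing the math: {math_s * 1e3:.2f} ms per token")
print(f"compute units idle for about {idle_fraction:.0%} of the step")
```

Under these particular assumptions the truck needs about a hundred times longer than the bricklayers. Serving many requests in one batch shares each trip across more work, which is one reason real systems do better than this worst case.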

The What: Mapping the Agent to the Machine

Why does this matter for your “Vibe Coding” or your autonomous agents? Because the VNA model directly constrains the “Reasoning” we are trying to achieve:

The Three Lanes of Traffic

- The Weights (Knowledge): Billions of parameters stored in main memory. The sheer volume makes every query a high-latency event.

- The Context (Short-term Memory): Your “Chain-of-Thought” and “Scratchpads” live in the working memory. As your agent’s reasoning gets longer, the “traffic jam” on the bus keeps getting worse: the stored context grows with every token, and all of it has to be read again for the next one.

- Tool-Use (RAG): Every time your agent reaches out to a database or an API, it’s another round trip across the bottleneck, adding unpredictability and “lag” to what should be a seamless interaction.
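The context lane is the easiest of the three to size up. The Python sketch below estimates how many bytes of cached keys and values (the "KV cache" that transformer models keep for earlier tokens) must be read to produce each new token. The layer count, head count, head size, and precision describe a hypothetical model I made up for illustration, not any specific published one.

```python
# Sketch: how the "context lane" grows as an agent keeps reasoning.
# The model shape is a hypothetical example, not any specific published model.

N_LAYERS = 32        # assumed number of transformer layers
N_KV_HEADS = 32      # assumed number of key/value heads per layer
HEAD_DIM = 128       # assumed size of each head
BYTES_PER_VALUE = 2  # assumed 16-bit cache entries


def kv_cache_bytes(context_tokens):
    """Bytes of cached keys and values that are read to generate the next token."""
    # The leading 2 accounts for storing both a key and a value per token.
    per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return context_tokens * per_token


for tokens in (1_000, 10_000, 100_000):
    gib = kv_cache_bytes(tokens) / 2**30
    print(f"{tokens:>7} tokens of context -> {gib:6.2f} GiB read per new token")
```

With this assumed shape, each token of context adds half a mebibyte to the load, so a long scratchpad can rival the weights themselves in traffic.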

The Tax: Energy, Latency, and Scalability

The “Tax” isn’t just a metaphor; it’s a line item on your cloud bill.

- Energy: Fetching a value from off-chip memory can cost orders of magnitude more energy than the arithmetic performed on it.

- Latency: It’s why your agent feels “sluggish” during complex multi-step tasks.

- Scalability: We are throwing more GPUs at the problem, but we are essentially just adding more bricklayers to a site that still only has one pickup truck.
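The energy side of the tax can be sketched the same way. The Python snippet below splits the energy for one generated token into data movement and arithmetic. Both per-operation energy costs are order-of-magnitude placeholders that I am assuming for illustration; real values vary widely with the chip, the memory technology, and the manufacturing process, and the 7-billion-parameter model is likewise hypothetical.

```python
# Sketch: where the energy for one generated token goes.
# Both energy costs are assumed order-of-magnitude placeholders, not measured values.

PJ_PER_BYTE_FROM_DRAM = 100.0  # assumption: picojoules to fetch one byte from off-chip memory
PJ_PER_MATH_OP = 1.0           # assumption: picojoules for one multiply or add


def energy_per_token_joules(params, bytes_per_param=2):
    """Return (data-movement joules, arithmetic joules) for one token, with no batching."""
    move_pj = params * bytes_per_param * PJ_PER_BYTE_FROM_DRAM  # read every weight once
    math_pj = 2 * params * PJ_PER_MATH_OP                       # one multiply + one add each
    return move_pj * 1e-12, math_pj * 1e-12


move_j, math_j = energy_per_token_joules(7e9)  # hypothetical 7B-parameter model
print(f"data movement: {move_j:.3f} J, arithmetic: {math_j:.3f} J")
print(f"share spent on movement: {move_j / (move_j + math_j):.0%}")
```

The exact split depends entirely on the placeholder costs, but the shape of the result is the point: when every weight makes the full trip for every token, the truck's fuel dominates the bill.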

The Cloud Bill as Physical Weight

The How: Breaking the Bottleneck

To move into a true Generative Era, we have to stop paying the tax. We are seeing the rise of Non-Von Neumann designs:

- Near-Memory Computing: Bringing the “brain” to the “library.” By performing calculations next to (or inside) the memory where the data lives, we cut out most of the commute.

- Specialized Accelerators (TPUs/ASICs): These aren’t just faster processors; they are redesigned “cities” where memory and logic live next door to each other, optimized for the massive parallel math of AI.

- Algorithmic Pruning: If we can’t make the truck bigger, we have to make the bricks lighter. This is the push for smaller, specialized models that can fit “on-chip.”
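The "lighter bricks" idea comes down to simple arithmetic: does the weight footprint fit in fast on-chip memory or not? The Python sketch below checks a few cases. The on-chip capacity, the model sizes, and the pruning ratios are hypothetical values chosen for illustration, and it assumes pruned weights are actually removed from storage rather than just zeroed out.

```python
# Sketch: does a model's weight footprint fit in fast on-chip memory?
# The capacity, model sizes, and pruning ratios are hypothetical values for illustration.

ON_CHIP_BYTES = 256 * 2**20  # assumption: 256 MiB of on-chip memory


def weight_bytes(params, keep_fraction, bytes_per_param=2):
    """Bytes needed for the weights after pruning, keeping `keep_fraction` of them."""
    return int(params * keep_fraction * bytes_per_param)


def fits_on_chip(params, keep_fraction):
    """True if the pruned weights fit entirely within the assumed on-chip capacity."""
    return weight_bytes(params, keep_fraction) <= ON_CHIP_BYTES


for params in (7_000_000_000, 1_000_000_000, 100_000_000):
    for keep in (1.0, 0.5):
        size_mib = weight_bytes(params, keep) / 2**20
        verdict = "fits on-chip" if fits_on_chip(params, keep) else "needs off-chip memory"
        print(f"{params / 1e9:>4.1f}B params, keep {keep:.0%}: {size_mib:>8.0f} MiB -> {verdict}")
```

Under these assumptions, halving a large general-purpose model still leaves it far too big, while a small specialized model fits outright. That gap is why the push is toward smaller models rather than trimming large ones.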

The New Architecture

We are at a crossroads. We can continue to brute-force 1940s architecture to run 21st-century intelligence, or we can admit that the “Tax” is too high. The future of Agentic AI isn’t just better software; it’s hardware that finally learns to get out of its own way.

Coming Up Next…

Now that we’ve looked at the hardware “traffic jam,” it’s time to look at the “Language” we’re sending across it.

How are we communicating with the AI?

Join me for Part 3: Decluttering the Interface, where we’ll ask the hard question: If the machine is finally talking back, why are we still speaking in 3rd-Generation Languages? It’s time to fire the “Middleman.”